How Can We Ensure AI Integration Supports and Enhances Learning?

Summary

The paradox of learning is that we learn by doing what we can’t do yet

AI integration helps solve this paradox through scaffolding/extending by acting as a “more knowledgeable assistant”, or rather as a “flexible thought partner”

In addition to providing a blueprint for effective AI integration, this approach also helps us avoid AI misintegration

Introduction

For the past two years, we have been hearing and reading a lot about AI and the claim that it can “support and enhance learning” - as well as undermine it. These claims are often accompanied by examples but, as striking as they might be, these particular illustrations are not enough to derive a general explanation of why and how this might be the case (or not). Such an explanation is needed if we are to:

Develop an understanding of effective AI integration

Transfer its potential to new contexts

Help teachers avoid AI misintegration in the learning process

AI and Learning Scales

In a previous article, I explained how AI integration can be fine-tuned to leverage the potential of these technologies for learning, all while mitigating their pitfalls. In this context, I proposed the idea of an AI Assessment Dashboard.

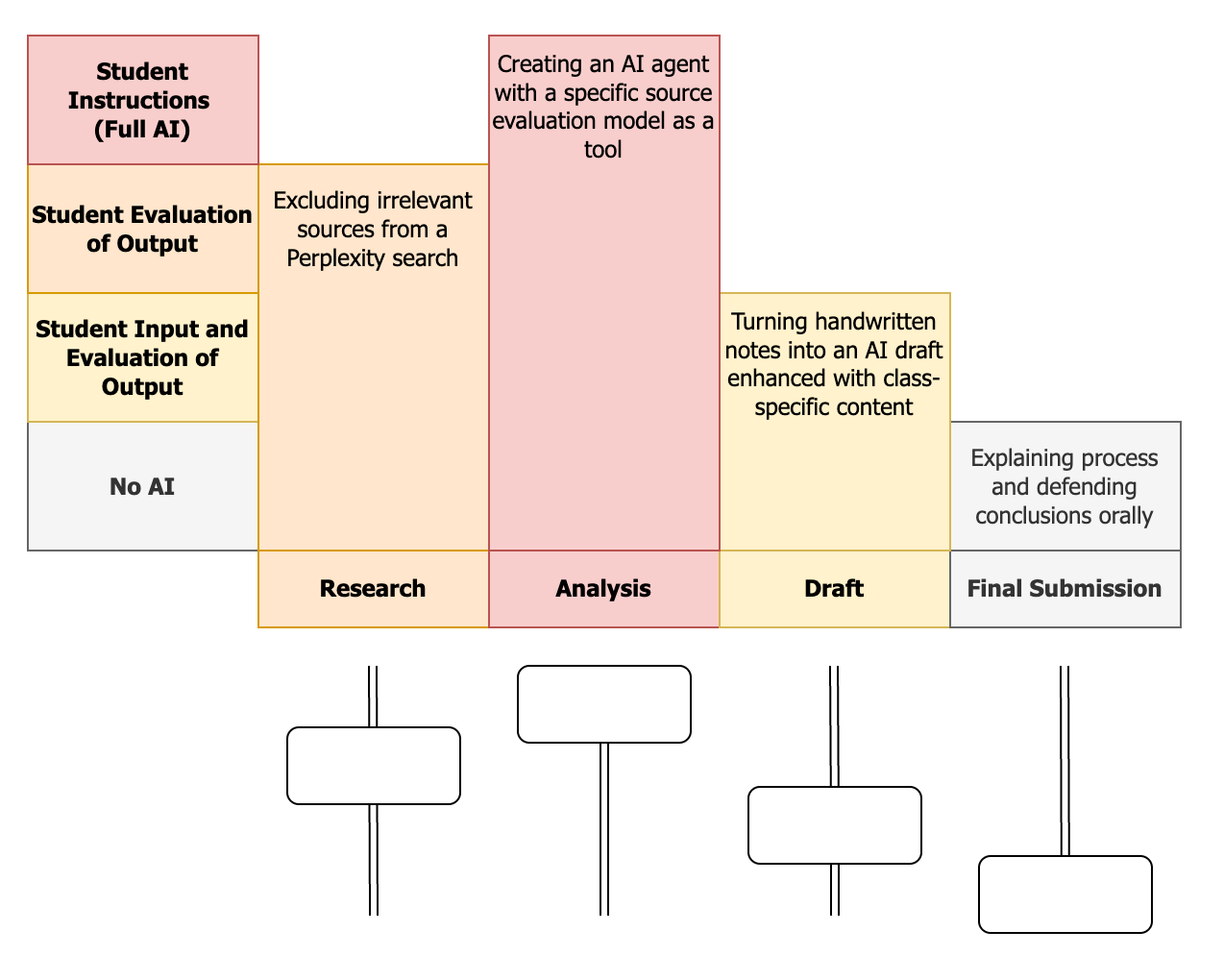

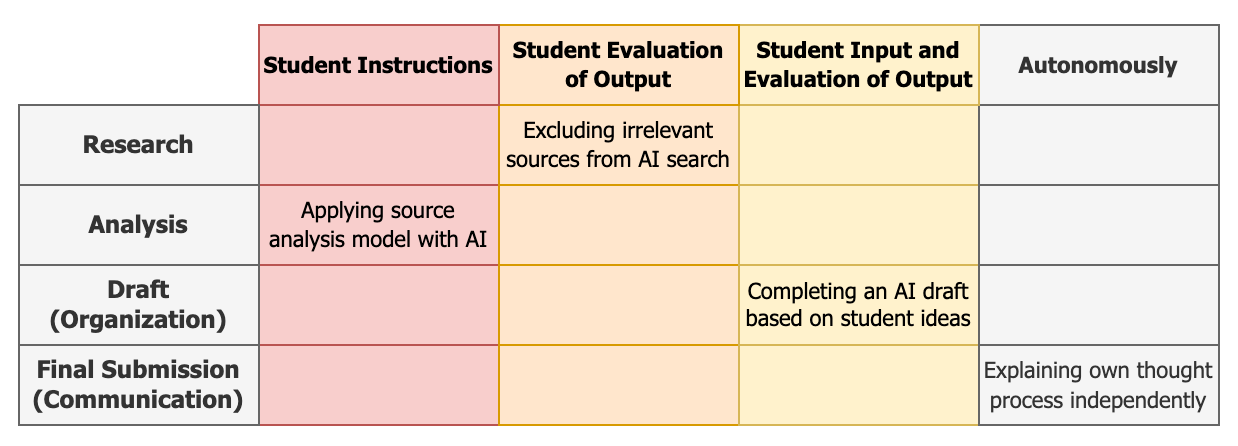

Contrary to an AI Scale such as the one critiqued here, an AI Dashboard does not describe an overall level of AI use in the final product of the student, but different levels of AI use at each step of the learning process.

More precisely, it defines specific competencies (learning objectives) that the student is expected to be able to demonstrate with specific degrees of AI assistance.

As such, an AI Dashboard is closer to a “learning scale” (Rinkema and Williams, 2018), i.e., a standards-based rubric describing in positive language what students can do at different levels of performance.

Such learning scales are usually organized by order of complexity of the internal skills demonstrated by the students, rather than by level of external help needed to do so. Here, however, AI assistance itself becomes a skill, as the Dashboard integrates AI competencies into standards-based rubrics, and thus into the curriculum.

As different as they are, these AI competencies can all be described as the ability to leverage AI technologies to support and enhance one’s learning.

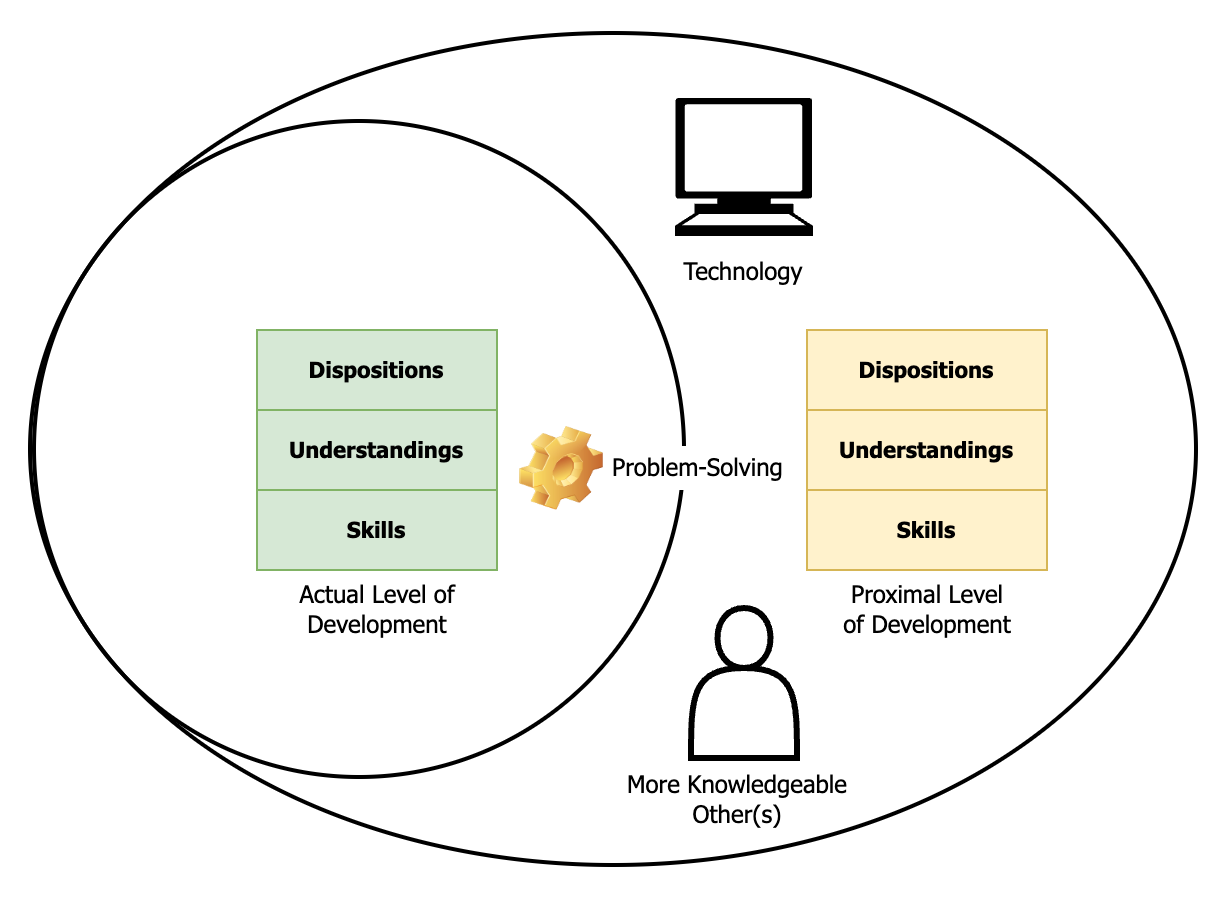

With this context in mind, it is easier to see how and why AI can support and enhance learning (or not). The diagram below helps visualize this process.

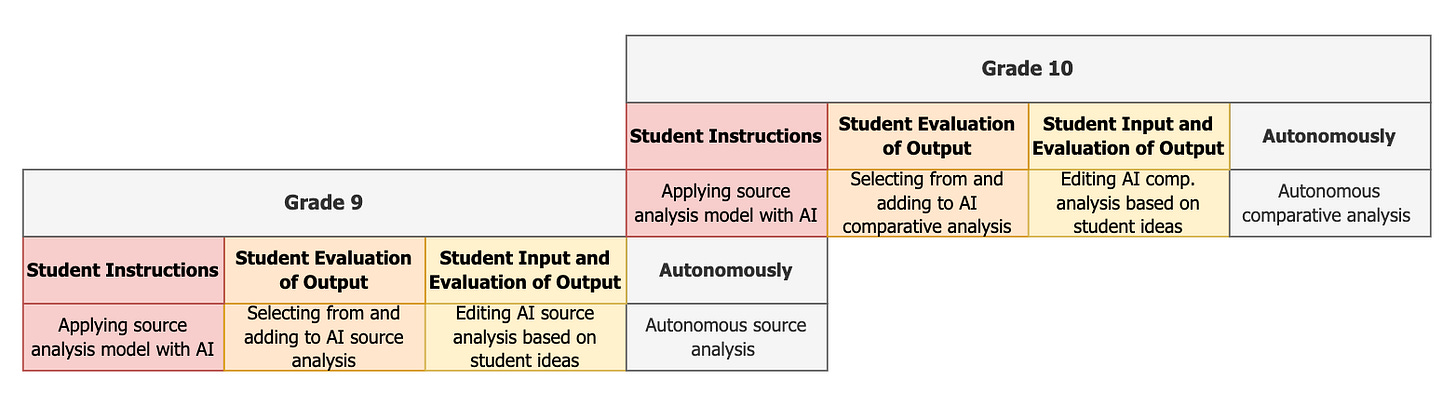

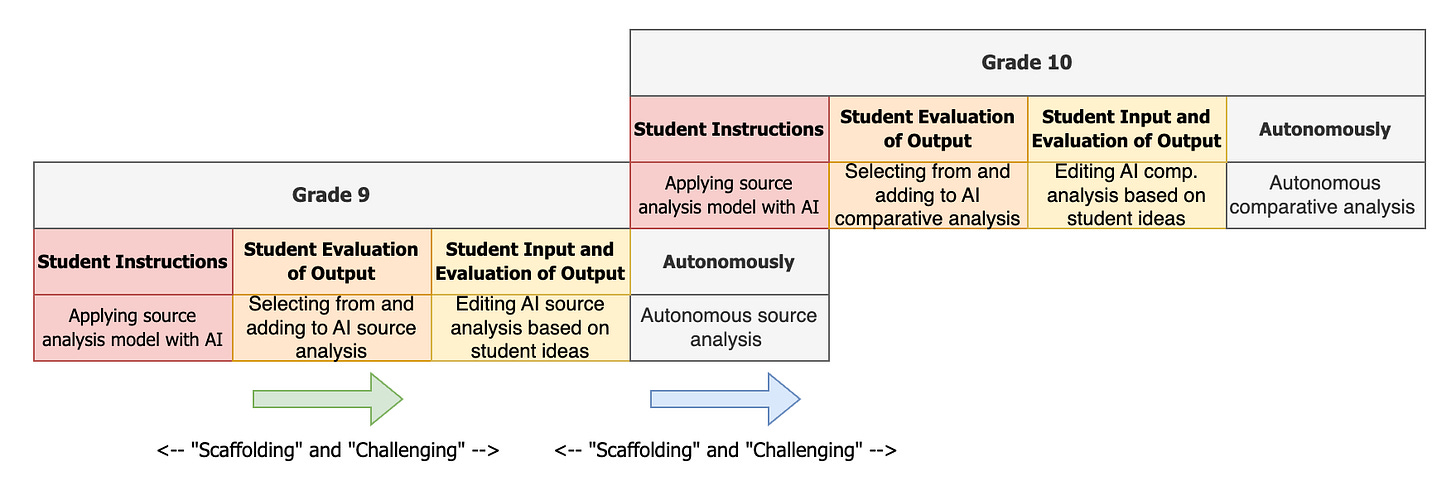

In learning scales, the competency targeted at a grade level becomes a prerequisite at the next grade level (Rinkema and Williams, 2018). In this example:

In Grade 9, the goal is for students to be able to analyze a source independently

To achieve this objective, they may start by simply prompting an AI assistant to apply a source analysis model for them - but, after a few intermediate steps, will eventually need to be able to do it themselves.

Once they are (and move on to Grade 10), this particular competency can be delegated to AI again - at least temporarily. Practicing the skill regularly will help maintain and strengthen it, but letting AI do the busy and “heavy work” will also free up cognitive resources to develop a new, more complex skill through focused and “fine work”: conducting a comparative analysis. This is what we could call AI Escalation.
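
For readers who think in code, the escalation described above can be sketched as a small state machine. This is only an illustrative model, not part of the Dashboard itself: the names `Stage`, `Competency` and `escalate` are hypothetical, and the rule that a skill must be mastered before it is delegated is my reading of the Grade 9 to Grade 10 example.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    SCAFFOLDED_PRACTICE = "scaffolded practice"  # student works with AI support
    MASTERY = "mastery"                          # student performs independently
    DELEGATION = "delegation"                    # skill is handed back to AI


# Order of the stages for a single competency (labels follow the article).
CYCLE = [Stage.SCAFFOLDED_PRACTICE, Stage.MASTERY, Stage.DELEGATION]


@dataclass
class Competency:
    name: str
    stage: Stage = Stage.SCAFFOLDED_PRACTICE

    def advance(self) -> None:
        """Move to the next stage; a delegated skill stays delegated."""
        index = CYCLE.index(self.stage)
        if index < len(CYCLE) - 1:
            self.stage = CYCLE[index + 1]


def escalate(current: Competency, next_name: str) -> Competency:
    """Delegate a mastered competency and start the next, more complex one."""
    if current.stage is not Stage.MASTERY:
        raise ValueError(f"{current.name!r} must be mastered before escalating")
    current.advance()  # MASTERY -> DELEGATION
    return Competency(next_name)  # the new skill starts as scaffolded practice


# Hypothetical walk-through of the Grade 9 -> Grade 10 example.
source_analysis = Competency("analyze a source")
source_analysis.advance()  # the student can now do it independently
comparative = escalate(source_analysis, "conduct a comparative analysis")
print(source_analysis.stage.value, "|", comparative.stage.value)
```

The sketch deliberately refuses to delegate a skill that has not been mastered first, which is the difference between escalation and simply letting AI do the work.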

The Paradox of Learning

The paradox of learning is that we learn by doing what we are learning to do, and thus can’t really do yet. This is where the ideas of “scaffolding” and “challenging” come from. They are often treated as opposites, because we tend to associate them with different types of learners: whatever learning objective is targeted at a particular point in time, there will be students for whom it is currently unreachable, so that they need “support” (scaffolding), and others who already have this competency and need to be further “challenged”. But “supporting” and “challenging” are actually the same thing.

In this example, if the current expectation is that students can analyze a source autonomously, letting students do so in collaboration with AI is a form of scaffolding and support, while letting them work with AI to conduct a comparative analysis is a form of challenge and learning enhancement. However, earlier in the year, when the expectation was that students could understand a source analysis (not perform it themselves), what is now scaffolding (working with AI to analyze a source) was a challenging “extension”.

Whether I “support” or “extend” a student’s learning only depends on 1) my expectations at this point in time and 2) the current ability level of this student. The names are different, but the pedagogical strategies are the same.
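
The same point can be made with a toy function: one activity gets a different label depending only on where the expectation sits. The ordering in `LEVELS` and the function `label_activity` are assumptions made for illustration, and the sketch takes for granted that the activity was already chosen to sit just beyond the student's current ability.

```python
# Hypothetical ordering of the activities named in the example, low to high.
LEVELS = [
    "understand a source analysis",
    "analyze a source with AI",
    "analyze a source autonomously",
    "conduct a comparative analysis with AI",
]


def label_activity(activity: str, expectation: str) -> str:
    """Label an activity relative to the current expectation."""
    gap = LEVELS.index(activity) - LEVELS.index(expectation)
    if gap < 0:
        return "scaffolding"  # below the expectation: support toward it
    if gap > 0:
        return "extension"    # above the expectation: challenge beyond it
    return "target"           # the expectation itself


# Same activity, two different points in the year.
now = label_activity("analyze a source with AI", "analyze a source autonomously")
earlier = label_activity("analyze a source with AI", "understand a source analysis")
print(now, "|", earlier)
```

Nothing about the activity changes between the two calls; only the reference point does.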

AI and ZPD

What are these strategies? Arguably all teaching strategies are strategies to support and extend learning, and AI can help (directly or indirectly) make any of them easier to implement and/or more effective.

What they have in common is that they help solve the Learning Paradox by enabling students to “learn by doing what they can’t do yet”. And this is what AI can help with.

More precisely, as can be seen in the diagram above, which illustrates Vygotsky’s well-known “Zone of Proximal Development”, learning involves:

Moving from current or “actual” dispositions, understandings, and skills, to the next level up

This “proximal” level is defined as the one required to solve problems the child cannot solve on their own yet, but can learn to solve in the right environment, with the help of technology and under the guidance of more knowledgeable others

Unsurprisingly, this next level is reached by solving problems under these conditions - an environment that can be described as “scaffolding” or “extending” learning, depending on whether the point of reference is the current or the targeted level of development.

This model gives us a blueprint to analyze in a systemic way how AI integration can help support/enhance learning. AI can help develop new dispositions, understandings and skills by:

Modeling and explaining the process to the students

Breaking down the process into incremental steps and allowing the students to practice and master them one by one as the AI carries out the other ones for them

Providing hints and feedback to help the student close the gap between their actual and proximal level of development

Scaling this support/challenge up and down individually and responsively

Providing an engaging and encouraging environment combining relevant (aligned with preferences and choice) and authentic (simulating real-life situations) learning experiences, the right balance of challenge and success to induce “flow”, a sense of autonomy (through active problem-solving), and a feeling of connectedness.

This last point might seem counter-intuitive. Isn’t there a trade-off between AI integration and human interactions in the classroom? Not necessarily.

Although they are not human and are incapable of true empathy (or maybe for this very reason), AI interfaces can induce psychological safety. Real or not, students experience 1:1 attention and warm responses from an interlocutor that can remember and adapt to relevant information about them without ever judging them

An AI-rich classroom can free up time for teachers to interact with students individually or in small groups

Indeed, there is no reason why AI-powered learning activities cannot also be group-based and collaborative

Other T&L Strategies

If the “learning paradox” is solved thanks to technology and more knowledgeable others, then it should come as no surprise that AI integration can help. Indeed, AI is exactly that: a “more knowledgeable assistant”.

There are of course other teaching and learning strategies that are not captured by Vygotsky’s model (see this article by Dr. Shannon Doak). But AI integration is no less useful in those cases, and always for the same reason: it can imitate and automate human cognition, and be “programmed” to behave in specific ways through natural language.

Students learn through meta-cognition and thinking about their own thinking - which is exactly what they will do when “prompting” an AI assistant to follow very specific instructions.

Students learn by teaching others - which is also something AI can generate: a scenario in which the bot is the learner (see this example by Ryan Tannenbaum)

Students learn by critiquing others - which again is quite easy to set up with a “flawed-by-design” AI assistant (see this article by Pascal Vallet).

In that sense, AI can act, in an educational context, not only as a “more knowledgeable assistant”, but more generally as a flexible thought partner.

AI Misintegration

Yet AI integration can also miss the mark and become a hindrance. Discussions around AI misuse often focus on students “cheating” and bypassing the intended learning. However, teachers can misuse AI too. This is the case not only if they fail to protect student privacy, or overlook the limitations of the technology and use it for grading purposes without a human in the loop, but also if their integration of AI tools in the learning process is misguided.

This is easier to understand and to avoid now that we have a blueprint for effective AI integration, as we can deduce that AI integration is ineffective when it does not support/enhance learning because:

It does not help students learn by doing, but outputs a product without engaging them at the right level in the process

It engages them in a process that is not aligned with the necessary learning objectives, but is rather an end in itself

It undermines their interest, sense of autonomy and/or connectedness

It crowds out their effort and/or leads to learning loss (including in relation to other objectives)

It takes the place of alternative, potentially more appropriate teaching and learning strategies

Conclusion

The paradox of learning is that we learn by doing what we can’t do yet. In this regard, AI integration can help enhance learning by scaffolding proximal levels of skill development. Not only can it act as a “more knowledgeable assistant”, or rather as a flexible thought partner, but AI Escalation helps students develop increasingly complex levels of understanding and skills through a dialectical process:

Scaffolded practice — Mastery — Delegation — Scaffolded practice

In addition to providing a blueprint for effective AI integration, this approach also helps us avoid AI misintegration, which might happen whenever AI integration does not scaffold/extend learning overall.