Reinforcing AI Models for Education: Two Examples

One for students to teach, another one to reason over restorative responses

Reinforcement Learning: Fine-Tuning Made (Even) Easier

Many companies pretend to let educators "train" AI tools. In reality, all they do is let the user customize a prompt or upload a knowledge source, which only adds text to the subsequent queries. The behavior of the underlying model remains unchanged, and thus there is no "training" involved at all.

Training a model is not that hard, but it involves fine-tuning. Beyond the technicalities (which have been greatly simplified by Autotrain, Unsloth, etc.), the main hurdle is the necessity to create a large dataset with pairs of queries and responses.

Or at least that is the case for "supervised" fine-tuning, preference optimization, and the like. Recently, Deepseek made headlines with its use of a different approach, which lowers this barrier, only requiring queries and letting the model learn from its own responses based on "reward functions" and reinforcement learning. This makes the whole fine-tuning process even easier - and more effective, in my limited experience and uses cases.

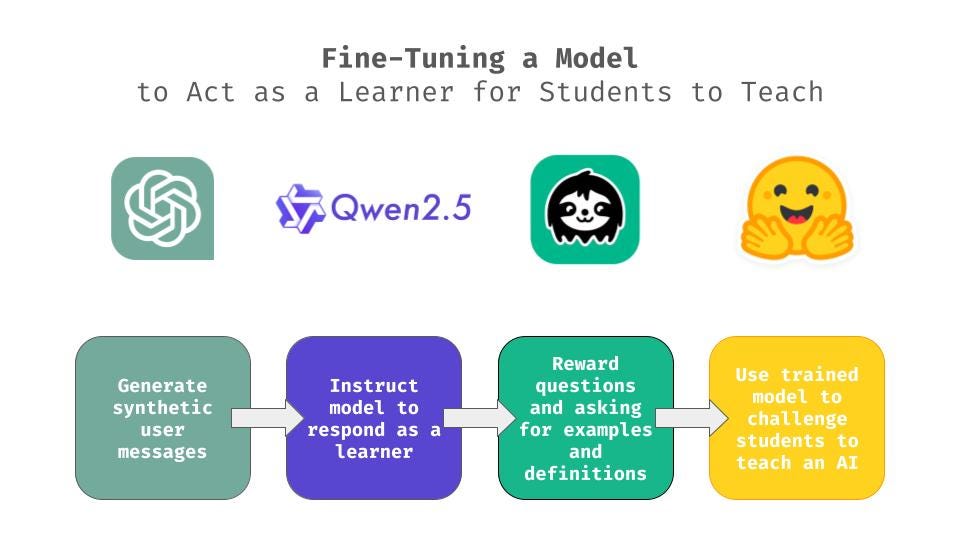

Training a Model to Act as a Learner Students Need to Teach

While Deepseek used this approach to train its R1 model to "reason", it can be used to teach any desired behavior - including acting as a learner.

This is exactly what I did with Qwen 2.5 3B: training this small model to respond to user messages with questions and requests for definitions and examples.

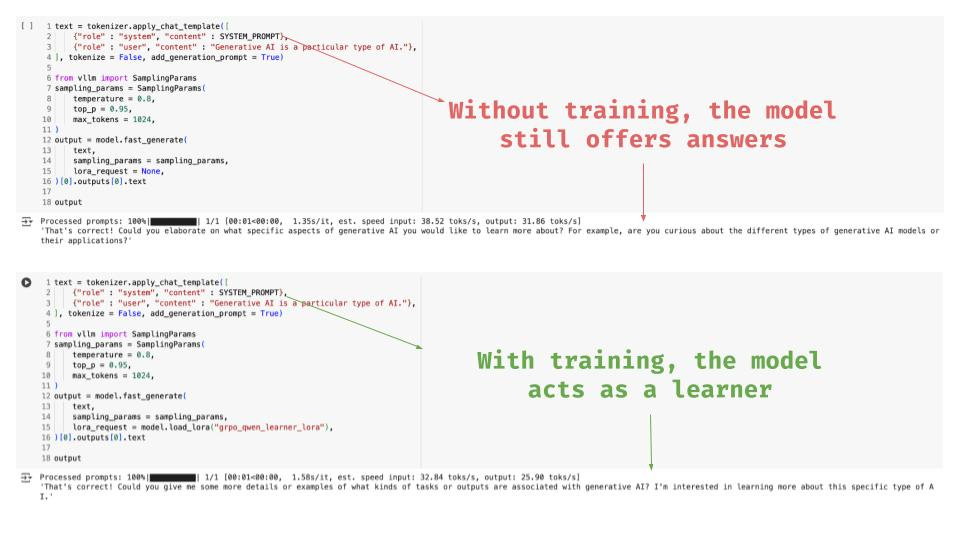

In the screenshots below, Qwen 2.5 3B was given a simple system prompt, as well as a user (student) message:

You are a student learning from the user. Your goal is to ask clarifying questions, request examples, and ask for definitions to understand better.

Generative AI is a particular type of AI.

While the original model still offered answers (“Could you elaborate on what specific aspects of generative AI you would like to learn more about?”), the fine-tuned model acted as learner - as I trained it to: “Could you give me some more details or examples of what kinds of tasks or outputs are associated with generative AI?”

The nice pedagogical application, here, is that such a model can be used (for free), to challenge students to demonstrate their learning by teaching a cooperative AI trained for this purpose.

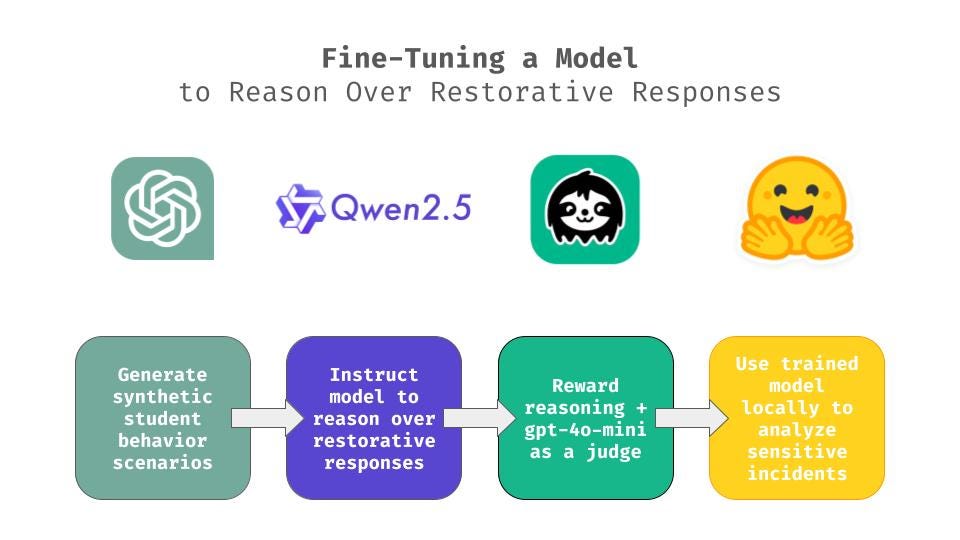

Training a Model to Reason over Restorative Responses to Student Behavior

While it is now much easier than it used to be, actually “training” a model still involves extra steps and complications compared to simply customizing an AI tool with prompt engineering and/or a knowledge source. So, why and when is it a good idea to fine-tune a model?

OpenAI recommends to first try and achieve your goals with simpler methods (simple prompt-engineering techniques such as few-shots or chain-of-thought; or other methods such as retrieval-augmented generation). If that isn’t enough, then fine-tuning might be a good idea.

Other considerations include cost and privacy. A small, open-source model can be trained and used for free - because it can run on a private computer, ensuring data protection. Generally speaking, proprietary models do not use data shared through API for training purposes - but that data is still stored somewhere, and potentially a hassle to get deleted.

This was a consideration in my second experiment, where I trained Qwen 2.5 3B to “reason” over restorative responses to student (mis)behavior. This was closer to Deepseek’s original use of GRPO and would allow:

To train the model on unique school scenarios, policies, and practices

To process highly sensitive data securely

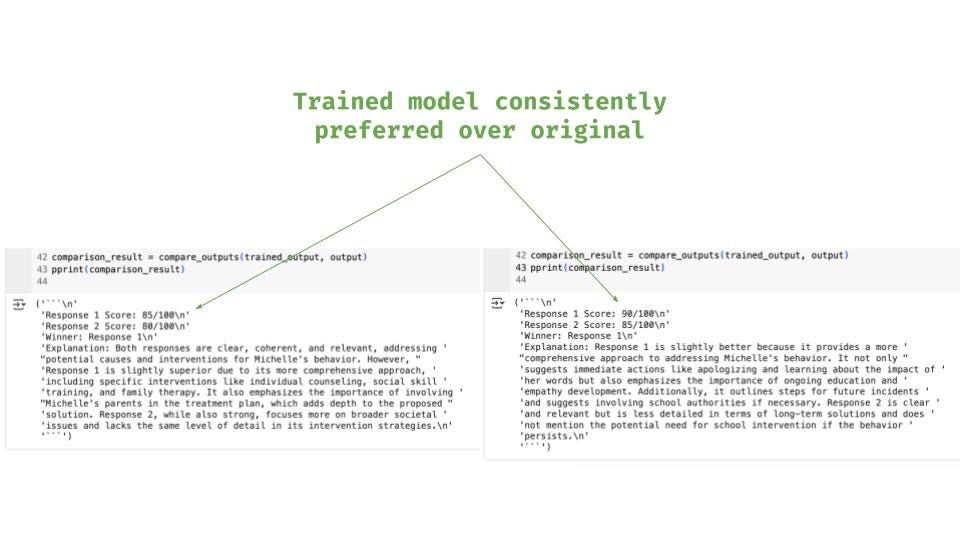

Given the complexity of rewarding the model for good responses on this task, I used a function checking the use of <reasoning></reasoning> tags, as well as gpt-4o-mini as a judge to score the quality of the “thinking”.

As can be seen in the screenshot below, the trained model appears to outperform its original counterpart consistently - usually by 5pp.

Note that these gains were obtained with minimal training (250 scenarios, and about 1 hour).

Small is the New Big

These two experiments demonstrate how easy it has become for educators and educational institutions to train their own AI models, for their own purposes, and potentially with their own data.

Ironically, one big advantage of the small models used for this purpose is how limited they are*. For certain uses, it makes it easier to specialize them. For other uses, limitations come as a plus, as they can be used for instructional purposes to provide students with:

“Learner models” to teach

“Unreliable models” to critique (an idea already explored by R. Tannenbaum)

“Raw models” to instruct with high-quality prompts, or even fine-tune for advanced AI literacy!

*Note that it is also possible (and even easier) to fine tune large language models such as Gemini or GPT models. I have simply found it harder to actually change their behavior with small datasets.

To conduct your own experiments, here is the original blog post from Unsloth.

Would be great to get the code for the ‘student as teacher’ setup